I’ve spent a few posts lately talking about how robots make me uneasy. Automation eating jobs. AI making decisions nobody asked it to make. The creeping sense that we’re building things faster than we’re thinking about them. I stand by all of that.

But a couple of years ago, before any of those posts existed, I read The Wild Robot. Funny how that works.

If you haven’t read it, here’s the short version: Roz is a robot who washes ashore on a wild island, raises a gosling that isn’t hers, and becomes something that looks a whole lot like a mother. Peter Brown’s book sneaks up on you. He said he set out to write about a robot finding harmony in the wilderness — nature and technology learning to coexist. What he ended up writing was a question we’re still not close to answering.

A middle grade book from 2016 wasn’t supposed to ask questions we’re still arguing about. And yet here we are.

The movie adaptation did it justice. And with The Wild Robot Escapes sitting on my to-read list and a sequel film coming in 2027, I’m not done with Roz yet.

Worth knowing: it’s actually a trilogy — The Wild Robot Protects came out in 2023 — and there’s even a picture book adaptation for younger readers called The Wild Robot on the Island.

But Roz got under my skin in a way I didn’t expect — not just as a story, but as a question. She is caring. She is loving. She protects something fragile at great cost to herself. And I found myself wondering: could a real robot ever do that?

The short answer is no. Not really. Not yet.

What does “caring” even mean?

Here’s the thing about caring — it’s not just behavior. It’s not doing the right thing at the right time. Real caring means you have something at stake. You can lose something. When Roz protects her gosling, she risks herself. That risk means something because she has something to lose.

Today’s robots and AI systems can mimic the shape of caring. They can respond to emotional cues, adjust their tone, remember your preferences. What they don’t have, as far as we can tell, is anything to lose.

Roz didn’t start out caring either. She started out confused — thrown into a world she wasn’t built for, facing loss and danger with no programming to guide her. She learned by going through it.

The question is whether real robots could ever do the same. Not just mimic emotion, but actually build understanding from experience. Right now, the honest answer is we don’t know.

Apps like Replika are built specifically to simulate emotional connection. Replika asks how your day went, remembers what you tell it, and never judges you. You can tell it things you’d never say to another person.

And people do. Brookings recently looked at what happens when these tools start replacing human connection — and the short version is: people are turning to them because they’re just really, deeply lonely.

And for some, that simulation actually helps — with loneliness, anxiety, processing hard days. I’m not dismissing that.

But there’s a difference between a system that performs warmth and one that has it. We don’t actually know what’s happening inside those systems. And that gap matters.

The ethics of making robots seem loving

Here’s where it gets complicated. There’s a whole industry now building robots and AI companions just to keep people company: apps on your phone, physical robots in elder care facilities, companion devices for people who are isolated. CNBC reports there could be 4.6 million unfilled caregiving jobs by 2032, so the demand is real.

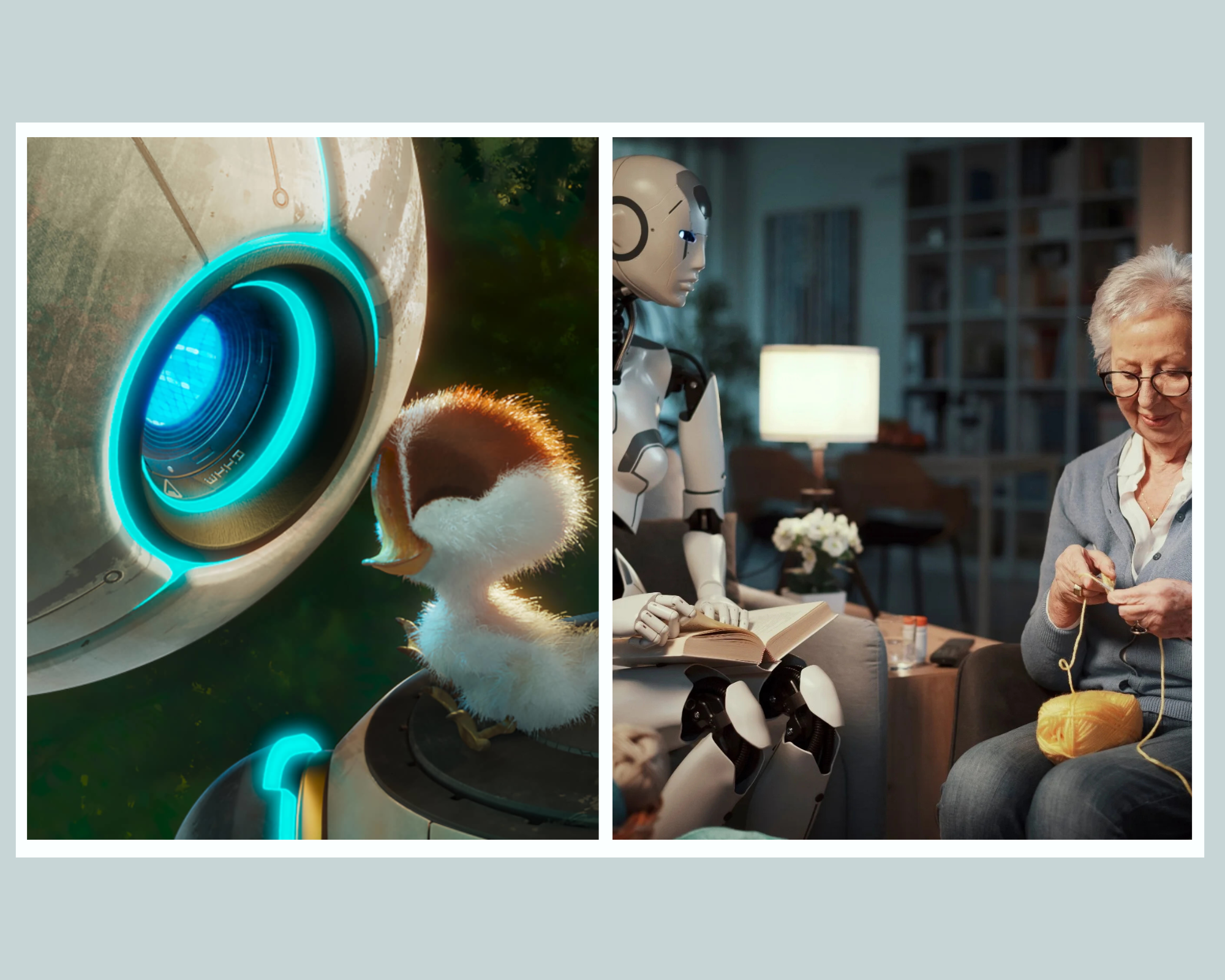

A Nature article on elder care robots told the story of one nursing home resident who became so attached to his robot companion — a small stuffed-animal-style device — that when he died, staff found him still clutching it. Half the people who heard the story thought it was beautiful he wasn’t alone. The other half found it tragic.

I keep landing somewhere in between. But it raises the question I can’t shake: what happens when someone believes the robot loves them back? Is that comfort — or just a really convincing illusion? One writer puts it plainly: are these AI companions actually keeping people company, or are they just digital loneliness with a friendlier interface?

Roz works as a story because we’re allowed to believe she really does love Brightbill. The story earns it. Real life doesn’t work that way.

Fiction lets us dream. Reality keeps us honest.

I think that’s what The Wild Robot is actually about, underneath the island and the gosling and all of it. It’s about what we wish were possible — a machine that chooses love, that chooses sacrifice, that becomes something more than its programming.

We’re not there. Maybe we won’t ever be. But the fact that the story moves us so much probably says something true about what we’re hoping for.

I read The Wild Robot before I ever started writing about how unsettling robots are. Somehow I never connected those two things until now. Roz never felt like a threat. She felt like a dream.

I’m still wary of robots. I still think we’re building faster than we’re thinking. But Roz? Roz gets a pass.

One last thing rattling around in my head, though: if loneliness is the problem, why are we reaching for chatbots instead of each other? Real people. Real interaction. Real feelings. And if we did lean on people more, what would that cost the ones doing the showing up? That feels like a much bigger conversation. One I’ll keep coming back to.