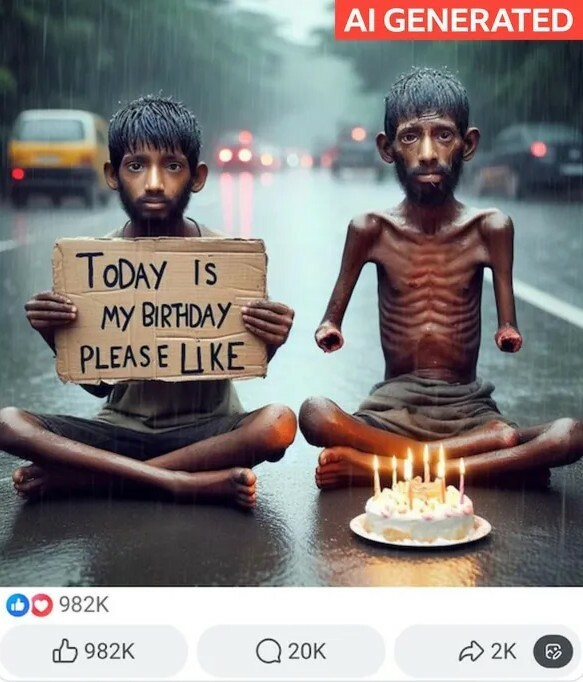

Look at this image. Really look at it.

Image Description: Two emaciated South Asian children, sitting in the middle of a busy road in pouring rain. A birthday cake in front of them. Despite their young faces, both have thick beards. One has no hands and only one foot. The other is holding a sign asking for birthday likes.

On Facebook, it got nearly a million likes and heart emojis.

That image is what pushed Théodore, a 20-year-old student in Paris, to start an X account called "Insane AI Slop" to document what he was seeing everywhere. And what he was seeing wasn't random. It was a pattern. Fake images designed to pull at your heart fast enough that you react before you think. And it's working.

"Slop oozes into everything. Like slime, sludge, and muck, slop has the wet sound of something you don't want to touch."

— Merriam-Webster, naming "slop" its 2025 Word of the Year

What We're Actually Talking About

Some AI stuff is fine. This isn't about that. Slop is reaction-bait: it doesn't need your attention, just your reflex. It's churn. Post, spike, disappear. Next one.

I see it constantly. On Facebook and X, I come across AI-generated movie posters for sequels that don't exist, films supposedly coming in 2026 with nothing in production anywhere. I've looked them up. Nothing. Political images are everywhere too, crafted to look authoritative, designed to provoke a reaction before you think to question them.

There's a temptation to say this isn't new. Political satire and caricature have existed forever. But that comparison doesn't really hold up. A cartoon signals what it is. A caricature announces itself. This stuff carries no such signal. It's built to pass. That's what makes it different from anything that came before.

And it's not a niche problem. Merriam-Webster, the American Dialect Society, and the Macquarie Dictionary all named "slop" their 2025 Word of the Year. When three separate authorities on language land on the same word, something real is being named. Washington Post critic Ron Charles put it this way:

"AI promised us miracles, and in a way, it has delivered them: fake images, Frankensteined videos, phony news, clickbait features, synthetic tunes, uncanny-valley podcasts and Cylon-composed books — all untouched by human hands or human intelligence. In a word: Slop."

Research from Kapwing found that more than 20% of the videos shown to a freshly opened YouTube account are already low-quality AI content: of the first 500 YouTube Shorts served to a new account, 104 were AI slop. The top AI slop channel on YouTube, India's Bandar Apna Dost, has racked up 2.4 billion views and earns its creators an estimated $4 million a year. This isn't happening in the background. It's a fifth of what a new user sees.

The Platforms Are Not Going to Fix This

When Mark Zuckerberg told investors during his Q3 2025 earnings call that social media had entered its "third phase," he wasn't sounding an alarm. He was celebrating. First came friends and family. Then creators. Now, he said, comes AI.

The feed doesn't have taste. It has one question: did you stop scrolling? Real content and AI slop both get clicks. Both keep people scrolling. And keeping people scrolling is the only thing these companies actually care about.

YouTube CEO Neal Mohan acknowledged the problem in a January 2026 blog post, promising better systems to reduce low-quality content. Then, in the same post, he compared AI tools to Photoshop. Pinterest rolled out an opt-out for AI content, except it only works if creators actually admit their stuff is fake. Meta and X have gutted their moderation teams and basically shrugged the whole thing back onto users.

Nothing's broken. This is the machine doing exactly what it was built to do. That's the part that gets me. Engagement is engagement whether the content is real or not, and that's what pays the bills. Until the economics change, there's genuinely no reason for any of it to slow down.

"It really does start to blur the boundaries, and it makes people feel like this AI slop is inescapable if you are going to be online."

Why This Is More Than Just Annoying

The annoying part is the least of it.

There's a researcher at the University of Padova named Alessandro Galeazzi who studies social media behavior, and he makes a point I keep coming back to. Figuring out if something is real takes actual mental effort. Do that hundreds of times a day across every app you open, and most people eventually just... stop. He calls it the "brain rot" effect — basically, a slow wearing down of your willingness to think critically about what you're looking at.

Emily Thorson, a misinformation researcher at Syracuse University, adds something to that. When a platform is used purely for entertainment, the only standard that matters is whether something is enjoyable, not whether it's true. In that environment, truth doesn't lose the argument. It just stops being part of the conversation.

The political stakes aren't hypothetical. After the US operation in Venezuela in January 2026 that removed Nicolás Maduro, fabricated videos started flooding social media almost immediately: Venezuelans cheering in the streets, thanking the US. One clip got 5.6 million views; Elon Musk reshared it, then quietly deleted it. NewsGuard tracked seven fabricated videos from that week alone, and combined they cleared 14 million views in under 48 hours. Just on X. That's fourteen million views of a version of events that didn't happen, and most of the people who watched probably have no idea. Doesn't surprise me one bit.

Dr. Manny Ahmed, founder of OpenOrigins and a Cambridge PhD who built one of the earliest deepfake detectors, says we've already crossed a line: you can't trust your own eyes anymore. His idea flips the whole thing around. Instead of chasing fakes after they've already spread, he thinks we need systems baked in from the moment content is captured — something that lets real content prove it's real, right from the source. It's a pretty radical shift, and the fact that someone with his background is pushing for it says a lot about how far gone things already are.

"Everything that made creators matter — the ability to be real, to connect, to have a voice that couldn't be faked — is now suddenly accessible to anyone with the right tools."

— Adam Mosseri, Head of Instagram, December 31, 2025

So Where Does This Leave Us?

Théodore has since mostly stopped posting. He built an audience of 133,000 followers, flagged disturbing content, got YouTube to remove some of it, and then largely accepted what he now calls the new normal.

"Unlike a lot of my followers," he told the BBC, "I'm not dogmatically against AI. I'm against the pollution online of AI slop that's made for quick entertainment and views."

And I think he's got it right. The problem was never AI itself. It's what happens when the internet's whole economic model runs on volume, speed, and reaction, and truth becomes an afterthought. AI didn't create that problem. It just made it cheaper, faster, and a whole lot bigger than anything we've dealt with before.

Could something emerge that bets on authenticity instead? It's possible. BeReal tried it: one unfiltered photo per day, no editing, no filters, two minutes to capture whatever you were actually doing. It hit number one on the US App Store in 2022, won Apple's iPhone App of the Year, made the bigger platforms nervous enough to copy its format, and then faded. Meanwhile, the other route, catching fakes after they're made, is getting harder, not easier. The machines built to spot synthetic content can't keep pace with the machines generating it.

So what's the internet actually for now? I genuinely don't know anymore. If the answer is just... entertainment, whatever it takes, then great: the platforms have already built exactly that. But if you want something that feels real, or actually connects you to other people, or gives you a version of the world you can trust, then what's going on right now isn't a glitch. We did this. Not just the platforms, not just the algorithms. Us, every time we clocked that something was off and scrolled right past it anyway.

Here's where I land on it: AI isn't going anywhere, and I'm totally okay with that. What I'm not okay with is nobody being held responsible for any of it. Label it. Every piece of this stuff, every platform, no exceptions. Not some opt-in checkbox that only honest people use, not a disclaimer buried three clicks deep — an actual label, right there, visible. Will that fix everything? No. But it changes who's on the hook. Online, real and fake start at the same place. You only find out which is which after it's already spread. That's backwards. We already figured this out in other places — you know what's in your food, you know what side effects come with your medication. Why does posting a fake video online get to be the one thing we don't have to disclose?

The engagement numbers suggest most people haven't decided they want something different yet. But numbers measure behavior, not belief. And there's a real gap growing between what people click on and what they say they actually trust.

Worth paying attention to.

Until next week.